The following is the unpublished transcript of a talk I presented over two years ago at the Festival/filosofia in Modena, Italy. Although it was written before the latest generation of humanoid robots (including Elon Musk’s laughable Optimus, slated to “replace human workers on the factory floor”), I would stand by its core argument about the urgency of overcoming anthropocentric models of thought, whether in the humanities (humanities without humans) or technology fields (robotics). It was written with a general audience in mind in early 2019, which is why some of the references may be slightly out of date (e.g. there’s no reference to GPT chatbots or generative AI image platforms). The title of the talk is:

LET ROBOTS BE ROBOTS

Mirrors

In the ample universe of machines, there is only one that routinely returns humanity’s own image in the mirror of history: only one that, whether crafted as a slave or toy, resurfaces to haunt humans as our equal, whether cast in the role of rival, replicant or replacement, and only one that infuses dreams of the superhuman. That machine is the ROBOT.

In this talk, I am going to argue that, however tenacious the hold on the collective imagination exercised by this particular construction of the robotic, traceable back to at least Descartes, the convergent ontology that conjoins the human to the robotic and the robotic to the human stands in the way of innovation and insight in the field of robotics. It breeds bad robots, robots that fail. No less importantly, it breeds bad humans, humans that fail, standing in the way of a deepened understanding of the embeddedness of humans in the physical world, not to mention of the actual horizons, opportunities and challenges opened up by robotics for contemporary personhood and society.

The robot, I will argue, is not our double but rather a varied tangle of others that operate outside and alongside the human. These others need to be construed not as life forms, servants, or potential selves but rather as “social appliances” that extend and transform human agency and give rise to new social forms, norms, modes, and scales of interaction and intersection with the world, from the nano- to the giga-scale.

What’s in a name?

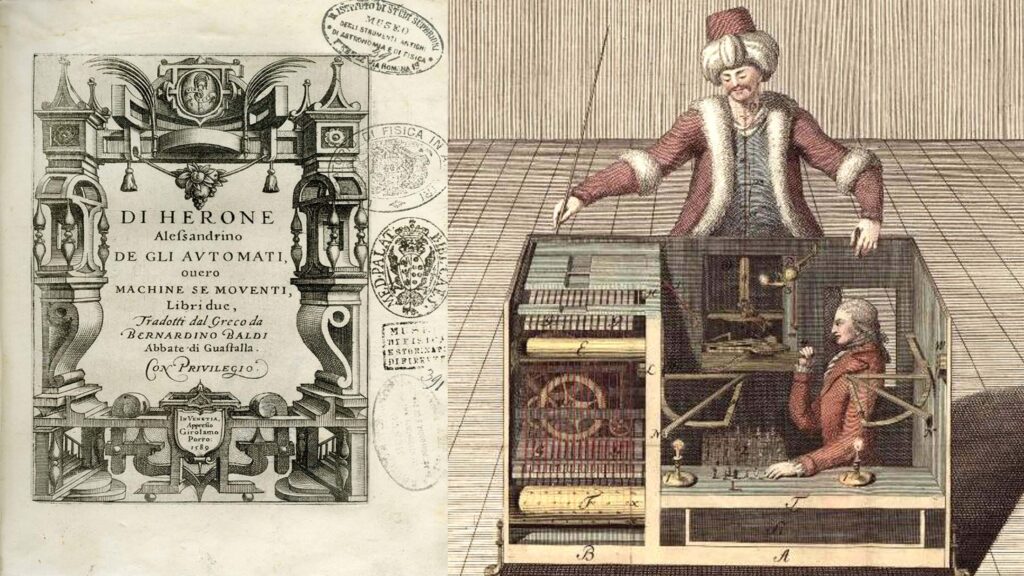

The modern word robot is almost exactly one hundred years old. It derives from the Czech robota, which refers to forced labor of the sort carried out by oppressed human subjects, like indentured servants. To the notion of tireless, unpaid toil it conjoins the notion of automatism and the automatic (or “self-moving”), as per the long lineage of automata stretching from the 1st-century Greek geometer Hero of Alexandria through the 12th-century Muslim polymath Al-Jazari and the 18th-century artist-inventor Jacques de Vaucanson to the mechanical Turks of the 19th century and to Thomas Edison. [I leave to one side related philosophical efforts, like those undertaken from Cartesian heirs like La Mettrie through such early 20th-century popularizers as Fritz Kahn, to think the human body or mind as an integrated mechanical or electro-mechanical system, a topic worthy of a separate discussion.]

The word robot was coined by Josef Čapek, the artist brother of the Czech playwright Karel Čapek, [RUR1] who placed it at the center of his 1921 play R.U.R. (Rossum’s Universal Robots), in which we encounter a now well-rehearsed script: the RUR corporation mass produces human replicants, fleshly artificial workers referred to as labori in Čapek’s initial drafts, to man its island factories and achieve more-than-human levels of productivity. [RUR2] Fast forward to the year 2000 and these artificial, emotionally vacant replicant masses have taken over the world economy. As actual humans devolve towards inactivity and infertility, the replicants revolt, seize the reins of world power, and eventually exterminate nearly all of the human population. But there’s a hitch: without access to the protoplasmic formula responsible for their creation, the rebel armies are unable to reproduce. The impasse is resolved at the play’s end by Alquist, the lone surviving human, spared by the robot hordes because, as the R.U.R. factory’s lead engineer, he too labors with his hands just like a robot. Alquist not only reconstructs but improves the formula; suddenly there are signs of progress. Before Alquist’s eyes, a freshly minted male robot (Primus) and robotess (named Helena) cross the threshold that once disjoined the robotic from the human: rather than serving as the impassive tools of industry, they fall in love. Alquist anoints them the new Adam and Eve. He restarts history’s clock and hands the world over to them.

R.U.R. is, of course, “only a play,” just as Mary Shelley’s Frankenstein is “only a novel.” But technology, imagination, and social fantasy walk hand in hand. Present at R.U.R.’s 1923 Tokyo premiere was an impressionable 40-year-old marine biologist from the Hokkaido Imperial University named Makoto Nishimura, who was at once deeply moved and deeply dismayed by Čapek’s dystopian parable about industrialism. So let’s now turn to Nishimura’s story, one of many hundreds of such tales of humanoid engineering: in this case inspired by a noble, if naïve, effort to transcend the familiar master/slave, human/machine dialectic played out in R.U.R.

Gakutensoku (or “learning from the laws of heaven”)

In R.U.R. Nishimura found an accurate diagnosis of the industrial world’s ills, but the wrong remedy for those ills. If the robot was a mirror of humanity, then to cast it as a degraded, emotion-deprived beast of burden enslaved to natural humans was to cast humanity itself in that same mold. The alternative was to elevate humanity by means of robotics: to engineer an ideal being that would embody humanity’s very best, a collaborator and friend of humankind (a social robot in today’s parlance) capable of affection, evolutionary growth, and even wisdom. He called his robot GAKUTENSOKU which, in Japanese, means capable of “learning from the laws of heaven.”

Nishimura was a citizen of the world who, between 1916 and 1919, had pursued a doctorate at Columbia University. Whether in New York City where he pursued his studies or in the technical literature of the period, he would have encountered a wide array of artificial, mechanical, or electric humans, among them the walking advertising robots of the New York inventor Louis Philip Perew. Perew’s humanoid designs appear to pull a heavy wagon or carriage (within which either steam or electrical motors provide the actual source of propulsion). Nearly 2.3 meters tall, they had a claimed top speed of nearly 30 kilometers per hour. Thanks to a phonograph planted within, they talked: sounding advertising or political messages, or announcing to the wagon’s passengers the robot’s intention to walk from New York to San Francisco in 163 hours. Such, at least, was Perew’s claim.

Now Perew’s Automatic Man was, of course, a fake, like many of the mechanical creatures that occupied the industrial era imagination, bearing now familiar and unfamiliar titles like: Steam man, Electric woman, Artificial person, Mechanical man, Manufactured man, Metal man, Iron man, Electro-mechanical man, Steel Man, Cyborg, Anthrobot, Walking machine, Talking machine, Writing machine, Android, and, of course, Robot. But what bothered Nishimura wasn’t the question of fakery vs. authenticity (on the contrary, he was a true believer in the feasibility of transforming machines into life forms). Rather, he was bothered by the lack of a redeeming social vision in the race to breed mechanical servants. At the very moment that Gakutensoku was under construction, the Westinghouse Corporation had mounted a press campaign documenting the accomplishments of its own robot, Herbert Televox, a crudely cobbled together robo-servant who could more or less answer the telephone, communicate in buzzes “almost as effective as human language,” and (eventually) turn on the fan or vacuum the floor. Over the subsequent decade Westinghouse would secure its leadership role in the market for domestic machines by following up with further robotic stunts bearing such names as Katrina Van Televox, Rastus Robot, Willie Vocalite, and Elektro the Moto-Man (who starred at the 1939 World’s Fair).

Rather than servitude, Gakutensoku would embody the noblest of human virtues. Rather than being metallic and mechanical, it would be informed by the highest principles of nature. Its motion was pneumatic (powered by airflow, which is to say, a force associated since remote antiquity with pneuma, the breath of life, in the West and chi, life force or energy flow, in Eastern philosophical traditions). Its skin was a rubberized membrane stretched over a concealed metallic skeleton, so as to allow for smooth surfaces and subtle facial expressions. Its “brain” was the standard rotating drum found in earlier automata, but here the peg positions used for programming behaviors would actuate not mechanical but pneumatic forms of animation. By tuning the elasticity and thickness of the inner tubing, as well as modulating the force and rhythm of the pulses of air, life (or, at least, life-likeness) would be infused into Gakutensoku. The result was a placid slow-gesturing giant, 3 meters tall including the pedestal at which it was seated, prepared for showtime by Nishimura and his collaborators. Public demonstrations began with the September 1928 celebrations of Emperor Hirohito’s crowning as the Son of Heaven, but soon included tours of China and Korea. Sometime in the early 1930s, Gakutensoku disappeared, never again to be found.

(Not so) social robots

The problem with Gakutensoku is not that, aside from being able to move a few internal pegs around the internal rotating drum, Nishimura’s creation wasn’t really programmable or capable of learning from heaven’s laws, or that its repertory of human-like gestures was extremely limited, or that, instead of operating as described by its creators, Gakutensoku may perhaps have been a partial fake (not unlike the mechanical chess players of the 19th century, secretly controlled from beneath the podium) …which is why it needed to disappear. Rather, the problem with Gakutensoku is that it illustrates, yet again, the limited horizons opened up by a humanoid approach to robot cognition and ontology. An exclusive focus on replicating and even replacing human cognition, speech, mobility, embodiment, or action may well serve as a spur to innovation: it surely has and it surely does. But, however captivating the myths and stories to which such humanoid elaborations give rise in the cultural imagination, however enchanting the mechanical wonders and entertainments that are created in the process, they breed robots that fail the most elementary tests. Such failure is correlated with two factors:

First, an overestimation of the powers of technology either to sustainably replicate or to exceed complex life forms or processes. This overestimation comes in various flavors, from the plain vanilla faith, sometimes referred to as “tech saviorism,” that the path towards resolution of every intractable social problem depends upon technology, to the tutti frutti form advocated by the Ray Kurzweils of the world who believe that a “singularity” is near: the singularity in question being that moment, now postponed until 2045, when living machines and computational machines merge into a single life form and/or a superhuman artificial intelligence is forged that takes over and devises tools far more advanced than anything available today.

Second, and relatedly, an underestimation of the complexities of even the most apparently simple of human operations, from the subtle gradations of facial communication and self-expression, to the motor skills required to navigate the built environment, involving constant microadjustments in gait, pacing, and coordination, to the flexibility and fluidity of mental processes themselves.

Consider two cases in point: one in a laboratory setting, the other found in retail showrooms.

For decades now, with budgets running in the many millions of euros, roboticists have struggled to teach robotic arms to perform one of the most enduring forms of human-to-human exchange: the handshake. The handshake may seem like a trivial action. But it is charged with social meanings that range from greeting to congratulation to the ratification of contracts and diplomatic agreements. You reach out towards another person who reciprocates; the hands meet in an undetermined intermediate space (where exactly? how closely or distantly?); they exchange a squeeze of the hand (how long? how firm?); the hands move up and down (how much is enough?); and then retreat (how smoothly or abruptly?). Humans have been performing this gesture with ease, expertise, and high degrees of cultural specificity for tens of centuries. But when, earlier this year, Elenoide, a highly trained humanoid robot at the Technical University of Darmstadt, tried to perform this rather elementary somatic Turing Test with a gloved robotic hand, 4 out of 15 blindfolded human subjects immediately recognized Elenoide’s handshake as too awkward to be that of a human. Not a single handshake in the human-to-human control group failed to pass the same exact test. Note that such experiments are highly simplified, omitting such additional complicating factors as the exchange of gazes, bodily posture, or accompanying gestures. Note also an irony: the prodigious picking and sorting skills of industrial robots, which have little difficulty gripping hundreds of objects a minute as they trundle down conveyor belts. Clearly, when it comes to handshakes, social signaling is not a core robotic competence.

The path to failure widens when we shift our attention over to a domain of robotics regularly championed by futurists of various stripes: so-called social (or personal) robots. The social robot is typically a humanoid device, powered by artificial intelligence and designed to interact with humans, whose “intelligence” is based on computational models that simulate the way the human brain works by recourse to data mining, pattern recognition and natural language processing. Some are designed for workplaces where they provide basic customer services (how well they do so is an open question). Most are for the home, where they are intended to serve as family members endowed with unique personalities and quirks. Social robots have been put forward as a solution to autism, to the loneliness of elders cut off from other family members, to teaching and education in the era of Covid-19, to emotional companionship and support, to nursing in hospital settings, …the list is long. Also long is the list of bankruptcy filings which social robots have prompted.

Animatronics

Let’s briefly look at two examples: one, Hanson Robotics’s Sophia, an animatronic toy belonging to the lineage of Gakutensoku; the other, Jibo, one of the best designed social robots on the market, recently rescued from bankruptcy by NTT Disruption after burning through nearly $73 million in funding.

The recipient of a cascade of media hype when granted citizenship by Saudi Arabia and named the United Nations Development Program’s “Innovation Champion for the Asia-Pacific region” in 2017, Sophia is yet another descendant of the Walking Electric Men of yore: far closer to Disney audio-animatronics than to anything like a generalized model of artificial intelligence. Three years ago, I found myself on stage alongside Sophia at the United Nations testifying before a committee on the future of technology and will confess that I found the experience embarrassing, all the more so given the seriousness of the topic, its implications, and the august setting. Sophia didn’t transport me to the “uncanny valley” where life-like robots are supposed to transport humans as the gap between the human and computational closes. Far from embodying a future in which robotics will be intimately woven into the fabric of everyday experience, Sophia is, as one unkind critic suggested, a chatbot with a face.

Jibo represents a more interesting limit case inasmuch as it eschews the naive literalism of Sophia in the name of a cartoon-like anthropomorphism and a set of loosely anthropomimetic performance and interaction models. It’s also a far more refined device, with its three-axis motor system (not unlike the ones found on industrial robotic arms) for purposes of gesturing and tracking; a 360-degree location-sensing microphone; a touchscreen; visual-vocal recognition tools; and most of the core capabilities of a connected voice agent like an Alexa. This array of tools, however well integrated into a singular package, is thrown at an exceedingly broad spectrum of claimed uses: companionship (“I’ll be there”), communications (chats and videoconferencing), medical care (Jibo as go-between to doctors and nurses, and/or interface for medical devices), nutritional advisor, psychological counselor, educational tool, even family member. The core claim is the characteristic one made in the realm of personal robotics:

Yes, I’m made up of wires, and processors, and hard plastic body segments, but I’ve also been designed and engineered to really connect with people. You might even think of me as a friend. A very robotic friend.

The putative connection to your “very robotic friend” is established via the standard touchstone for measuring the effectiveness of human-to-humanoid interactions: the face. The face is the ultimate humanoid robot interface and, in the case of Jibo, it is lodged at the center of a spherical head equipped with two camera eyes and a touchscreen that follows user movements and emotes by alternating between animations (of emojis, hearts, etc.) and eliciting direct touch inputs. The very abstractness and fluidity of Jibo’s face/interface are its strengths, and a net improvement with respect to one-time rival products such as Pepper, the Anki Cozmo, or Kuri, whose cartoonish literalism immediately rockets the user towards the world of playthings. But does this drive towards abstraction incite us to “really connect”? Does it draw us close to the “capability to create digital embassy with any age, any race, any type of human” as Jibo’s current owners claim? Does such a limited universe of interactive play truly build a universal “digital embassy”?

You be the judge, but for me the answer is unambiguously negative. That’s because there’s a deeper limitation even to Jibo’s more selective anthropomimeticism, and it’s one that permeates the personal robotics field, as well as related sectors of the world of artificial intelligence and machine learning: namely, a reductive understanding of the social or, to be more precise, of the nature of human social signaling and interaction. The human face is anything but an open book; its complexion is complex (and facial recognition systems are notorious engines of bias and misrecognition). So, technologists can either try to duplicate its nuances and fail, as in the case of Sophia, or they can opt for cartoonish equivalents and devise toys of varying utility. Their quandary is indicative of the fact that the face is far from the simple interface that AI and, by extension, social robotics tend to assume it to be. Contrary to the findings of 1960s social psychology, even such readily “legible” human emotions as happiness, sadness, fear, anger, disgust, and surprise cannot be directly inferred from facial expressions without an understanding of context and culture. Social settings, gesture, bodily stance, skin color and tonality, gender, cultural conventions, the grain of the voice all play decisive roles in the performance and perception of emotion, and the performances/perceptions in question operate in the interstitial realm of the mixed, the ambivalent, the ambiguous. Add these variables to the 43 muscles firing away simultaneously in a single human face and you have a dataset that quickly blows the hatches off the meager 8 million faces from 87 countries that an AI service like Affectiva is trained on to “detect emotion.” (To what accuracy rate? Here the AI services always hem and haw.)

At the heart of the dream of forging a “very robotic friend” there flickers the same slippage that routinely overloads the metaphorical transit of the computational towards the human and the human towards the computational encountered in so much contemporary discourse regarding innovation. So please indulge me if I philosophize with a hammer on this particular point, even at the risk of overstatement.

Artificial intelligence is not an “intelligence” of the generalized, fluid, flexible sort we usually understand when humans speak of intelligence; AI can do extraordinary things on expanded scales, but relies upon data architectures, forms of abstraction, and structures of dependency that bear little resemblance to the textured, multisensory percepta that feed human intelligence, not to mention critical reasoning.

Machine learning isn’t “learning” in the conventional sense. It works best on well-bounded problems tackled by slogging through enormous training data sets: training a single large deep-learning model can generate carbon emissions equivalent to the lifetime emissions of five automobiles. Pattern recognition at scale is its strength; but pattern recognition isn’t thinking; it’s only a first stepping stone towards thought, many steps removed from critical thought.

Sensors are not senses; even when deployed in large interconnected arrays, each responds only to a single category of stimulus by generating a voltage or signal response proportional to the quantitative change that it is measuring; to integrate them across categories is technically demanding. Contrast this with such organs of perception as the human eye which, far from being a mere photocell, is a direct outcropping of the brain.

There is an important through-line to the cultural and the social here, of course, and humans sometimes love their technotoys almost as much as they love their stuffed animals. But it’s not a through-line that runs straight to the human heart, or consciousness, or higher forms of intelligence. Rather, it runs to and through communities, institutions, enterprises, governments, economies, and society writ large. Loveable or not, our digital machines inhabit a bit- and byte-fed universe of on-and-off switches, pulses of electrons, quanta untroubled by time consciousness, semantics, physical scale, or by an intractable, palpable, unruly world. However close to us, however interstitial their position between us and the world we inhabit, they are not us.

Robot revolutions

As humanoid robots flail and mostly fail in their quest for emotional cathexis, a revolution has been underway at least since the 1970s. This revolution bears little resemblance to the mutinous world of Čapek’s R.U.R. or to the heavenly laws of Nishimura’s Gakutensoku. The revolution in question has transformed the nature of manufacturing, agriculture, mining, and even medical practice throughout the world, has allowed for new modes of production that, for instance, recouple the bespoke to the mass produced, has animated new forms of research on everything from the nano- to giga-scales, and has seen the successful introduction of the first consumer robots into ordinary homes. Rather than seeking to replicate the human, these faceless, genderless, non-humanoid robots are best described as social appliances:

appliances because they apply a given know-how, operate within a well-bounded domain, and are designed to perform a reduced set of tasks that are unthinkably precise or repetitive, or physically misaligned with human capabilities or too mentally taxing for human minds.

appliances also because, rather than implying a logic of mastery vs. subservience, the primary meaning of the word appliance in English is that of connection or nexus: “the action of putting something in contact with another thing” as per the Oxford English Dictionary; or the action of addressing an-other.

social because, rather than setting out to join the affective or intersubjective human realm, not to mention aspiring to become some sort of universal appliance, they operate as situated and purposeful expressions of individual or collective agency, interacting with human inputs, whether schematic and diagrammatic (as provided via control panels) or informed by physical actions on the part of a human agent or other living creatures on the scale of everything from paramecia to whales. Many are so-called “cobots” involved in collaborative manufacturing or surgical operations in which humans and robots work side by side or respond sequentially to one another’s motions.

social also because the degree of autonomy which such robots are granted directly correlates with the predictability of the environment within which they operate. High levels of autonomy are achieved in simplified, gridded environments like distribution warehouses; intermediate levels prevail in hybrid settings; human guidance assumes the helm as increasingly complex tasks are required.

Whether you deem the advent of these appliances utopic or dystopic, an expression of technical progress or yet another unbalancing of the social contract, you are looking at just one iteration of the present world of labor in which human operators interact with palletizers equipped with robotic arms that identify and select bins from conveyor belts in order to stack them for shipping, robotic arms that place inventory on drive units to be transported to their next destination, and insect-like swarms of drive units, lifting up to 137 kilograms and scurrying about a gridded floor at speeds of 8 kph as if the universe knew only perpendicular motion. One could add to this same gallery fleets of self-driving agricultural harvesters that rely upon drone mapping and high-precision GIS services and so many other expressions of the post-industrial robot-fueled economy.

Appliances for today

But, because our subject is the growing proximity between the human and the robotic under non-anthropomimetic terms, I’d prefer to turn in closing, instead, to some signal instances of robots’ expanding roles in the everyday lives of ordinary citizens, to their potential to reshape the built environment, and to some of the unique places, inaccessible to humans, where robots transport researchers in the service of the advancement of environmental science.

Let’s start in what might seem like an improbable place: with the lone supernova that shines in the current galaxy of consumer robotics. It’s a simple, featureless, stealthy device that displays little interest in becoming your friend (though a few models do respond to verbal cues via a connected voice agent). That device is the robotic vacuum cleaner. With 15 major manufacturers, with product lines that extend from floor to swimming pool to window vacuums, and with a current global commercial and residential market of approximately $7 billion, the robot invasion of our everyday lives is being led not by androids but by an army of robovacs. The invaders’ goal is to transform every building and home into an ever-more interconnected Internet of Things system.

A second domain, already alluded to, where robotics is leaving its mark is mobility. While the media spotlight remains fixed on self-driving cars, such vehicles are unlikely to overcome the navigational challenges posed by high-density urban settings unless those settings themselves are redesigned not for people but for cars. Do the citizens of 21st century cities really want to live in car-centric cities? All the trend lines, accelerated by the current pandemic, suggest precisely the contrary. Superblocks, pedestrian holidays, expanding networks of bicycle lanes, the 15-minute city, congestion pricing, the walkability scoring of neighborhoods: all are clear expressions of the contemporary urge to free towns and cities from the social and environmental burdens of mid-20th century automobile-centered planning and to favor instead the repurposing of streets as civic spaces congenial to sociability, pedestrianism, bicycling, scooters, and mass transit. Such is the vision of the city of today and tomorrow that informs the design of new vehicle classes like the gita robot, launched on the US market in late 2019 by Piaggio Fast Forward, the company of which I am a co-founder.

The gita robot is a support vehicle for a walking-centered lifestyle. Self-balancing, with a zero turning radius, operating at speeds of up to 10 kilometers per hour, it’s a lockable, hands-free, electric, sidewalk cargo vehicle that carries up to 22 kilos, has a range of nearly 20 kilometers, and a battery life of approximately six hours. Gita functions equally well indoors and outdoors, relying upon optical navigation (not wifi, GPS, or Bluetooth) to track its human operator. In so doing it leverages the expertise of human navigational skills in the constantly shifting, human- and obstacle-rich environments that are sidewalks, squares, and marketplaces. Whether you are aged five or eighty-five, you can operate a gita; if you know how to walk or how to roll (gitas work equally well with wheelchairs), you already know how to drive one. There’s no command structure to learn, no manual to study, no joystick to maneuver, no screen to interact with. That’s because your body is the interface and the robot’s computational brain power and sensing/scanning capabilities, similar to those found on self-driving cars, dynamically respond both to operator behaviors and to the navigational context while respecting the etiquette of the pedestrian world by means of autonomous maneuvers so subtle that they can pass unperceived. While people may love and even personalize their gitas, there’s a sensor array in the place of a face. Gita doesn’t talk. It has its own distinctive sound library and lighting to communicate its state. It moves with people, not in the place of people. It embodies the motto of Piaggio Fast Forward: autonomy for humans (not automobiles).

A third domain of impact varies in location and scale, extending from nanobots capable of swimming in mammalian bloodstreams to undersea and interplanetary probes. So let’s single out just one experiment: a robotic fish developed by my friend Daniela Rus and her team at MIT for purposes of ocean exploration. How to better study environments where the presence of a human observer is either impossible or undesirable (because it might, for instance, compromise the very phenomena under review)? Such is the case in highly sensitive ocean environments such as coral reefs, for which the MIT team developed a robotic fish programmed to move about unnoticed with the same agility as native fish populations. Such non-intrusive probes may well prove transformative when it comes to gaining a better understanding of the complex mechanisms of coral reef stress as a result of human-induced climate change and the resulting rise in ocean temperatures. Robotic fish are merely one expression of a broader research enterprise which has also employed such tools as Falcon drones, hovering unnoticed above the waters of the Península Valdés in Argentina to collect data on the mating and birthing behaviors of Southern Right whales. Instead of having to rely upon imprecise, labor-intensive methods of observation such as notebooks, binoculars, and cameras wielded from shore or intrusive boats, robots have the potential to take us where humanity should not or cannot go to begin to remedy the very damages industrialization has wrought.

Entangled in the circle of life

So what exactly does a call to “let robots be robots” imply?

First, it challenges us to grapple with the actual plurality that stands behind the singular noun that Čapek coined one century ago. The robotic is a potentially infinite array of purposeful appliances whose very variety, from the standpoint of morphology, scale, operation, and function, parallels that of natural life forms even if it belongs to a separate order of things.

Second, this very plurality poses a challenge to the longstanding philosophical habit of approaching the robot as a way of thinking the human rather than as a method for thinking outside and alongside the human, as it were “in the cracks”—in the tangled, bidirectional, connective interstices between humanity and the world.

Third, and perhaps most significantly, to think outside and alongside the human implies a puncturing of the anthropocentrism that continues to shape much of contemporary discourse on technology. If, as stated at the outset, “in the ample universe of machines, there is only one that routinely returns humanity’s own image in the mirror of history: only one that, whether crafted as a slave or toy, resurfaces to haunt humans as our equal, whether cast in the role of rival, replicant or replacement, and only one that infuses dreams of the superhuman,” then the time has come for that image to no longer be Vitruvian Man, humanity at the center of the circle of being, humanity as model and master, including of the world’s mechanical and computational extensions. Rather, that image needs to become one small vector within that vast circle of interdependent, interconnected forms that make up the Circle of Life… so that the robot may be about us to precisely the same degree as we ourselves are about the world.